Google just dropped Gemma 4. You can run it on your phone.

Google DeepMind released Gemma 4 this week and it changes the equation for anyone running AI locally.

It’s ranked #3 in the world for open models, right behind Kimi K2 and GLM. The difference? Those two are massive. Gemma 4 is a fraction of their size and sits right there with them.

Three things that matter:

It costs nothing. No API bills, no subscriptions. Download it, run it on your machine, done. If you’re paying for AI tools to handle routine tasks like summarising, monitoring, or pulling data, this does the same thing for free.

100% private. Nothing leaves your device. No data sent to Google, no data sent to anyone. If you work with client data or anything you wouldn’t paste into ChatGPT, this is the answer.

It runs everywhere. Your laptop, your desktop, even your phone. Getting started with Ollama takes two steps: install it, then run ollama pull gemma4 to download the model. After that, ollama run gemma4 opens a chat in your terminal. That’s it.
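If you’d rather call the model from a script than from the terminal, Ollama also serves a local HTTP API once it’s running. Here’s a minimal sketch using only the Python standard library. It assumes Ollama is on its default port (11434) and that the model tag really is gemma4 as above. The function names build_request and ask_local are mine, not part of Ollama.

```python
import json
import urllib.request

# Assumption: Ollama is running locally on its default port.
OLLAMA_URL = "http://localhost:11434/api/generate"
# Assumption: tag taken from the pull command above; confirm with `ollama list`.
MODEL_TAG = "gemma4"


def build_request(prompt: str, model: str = MODEL_TAG) -> urllib.request.Request:
    """Build a non-streaming generate request for the local Ollama server."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask_local(prompt: str, model: str = MODEL_TAG) -> str:
    """Send the prompt to the local model and return its text reply."""
    with urllib.request.urlopen(build_request(prompt, model), timeout=120) as resp:
        # With stream=False the server returns one JSON object; the text is under "response".
        return json.loads(resp.read())["response"]
```

Calling ask_local("Summarise this paragraph: ...") returns the model’s reply as a string. The request only goes to localhost, so the prompt stays on your machine.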

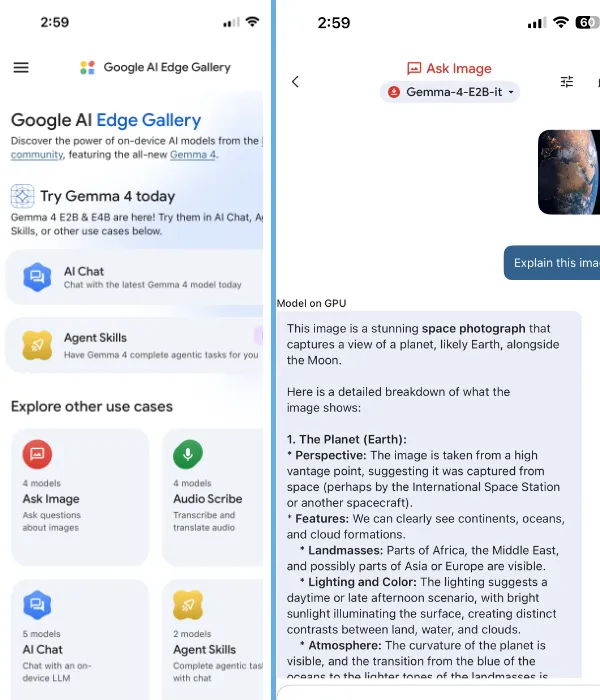

You can also run it through LM Studio, Hugging Face, or on your phone with the Google AI Edge Gallery app (available on iOS too). The mobile app runs Gemma 4 entirely on device with image recognition, voice transcription, and Wikipedia grounding. Fully offline.

The smart play here is a hybrid setup. Keep Claude or whatever premium model you use for the hard stuff: complex reasoning, content strategy, anything where quality really matters. Route everything else to Gemma 4 running locally. Two brains, one machine, and your API bill drops significantly.
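The routing logic for a setup like this can be tiny. Here’s a rough sketch of one way to do it, not a finished tool. The task labels, the Route type, and the "premium-model-placeholder" name are all hypothetical. Swap in whatever categories and paid model you actually use.

```python
from dataclasses import dataclass

# Hypothetical labels for work that justifies a paid model,
# loosely mirroring the "hard stuff" examples above.
PREMIUM_TASKS = {"reasoning", "strategy"}


@dataclass(frozen=True)
class Route:
    backend: str  # "local" or "premium"
    model: str


def route(task: str, sensitive: bool = False) -> Route:
    """Pick a backend for a task; sensitive data always stays local."""
    if task in PREMIUM_TASKS and not sensitive:
        # Placeholder name: substitute the premium model you pay for.
        return Route("premium", "premium-model-placeholder")
    # Everything else, including unknown task labels, goes to the local model.
    return Route("local", "gemma4")
```

With this, route("summarise") picks the local model and route("reasoning") picks the premium one. route("reasoning", sensitive=True) stays local, so client data never leaves the machine. Defaulting unknown tasks to local keeps the API bill from creeping back up.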

I’m swapping out the current local model on my Mac Mini for the 31B. Same memory footprint, better performance, and it’s released under Apache 2.0, which means it’s genuinely open source with no restrictions on how you use it. Will report back once I’ve run it through real workloads.