The Next Wave of Search Is Crawling Markdown

Most AI visibility tools tell you which bots are visiting your site.

That’s log analysis. Yawn! Useful, but not new.

I’m running a different experiment.

Cloudflare has a beta feature called Markdown for Agents. When a client sends an Accept: text/markdown header, Cloudflare converts the page to clean markdown on the fly. No navigation, no JavaScript, no noise. Just content.
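To make that concrete, here is a minimal sketch of what a client asking for markdown looks like, using only Python's standard library. The helper name `build_markdown_request` and the example URL are placeholders for illustration; the only detail taken from the feature described above is the `Accept: text/markdown` header.

```python
import urllib.request


def build_markdown_request(url: str) -> urllib.request.Request:
    """Build a GET request that asks for markdown via the Accept header."""
    # The Accept header is the whole trick: without it, the server
    # has no signal that this client prefers markdown over HTML.
    return urllib.request.Request(url, headers={"Accept": "text/markdown"})


# Usage (makes a real network call, so it is left commented out):
# with urllib.request.urlopen(build_markdown_request("https://example.com/")) as resp:
#     print(resp.headers.get("Content-Type"))
#     print(resp.read().decode("utf-8")[:500])
```

Whether you actually get markdown back depends on the site having the feature enabled; a site without it will most likely just return its normal HTML.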

The key detail: the bot has to specifically request markdown. It doesn’t happen automatically. Coding agents like Claude Code already send this header. The major crawlers (GPTBot, PerplexityBot) largely don’t yet.

I enabled it and built a tracking layer on top to log every markdown request: user agent, timestamp, path, frequency.
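A rough sketch of what a tracking layer like this could look like, written as plain Python rather than as the actual setup behind this experiment. The function names, the in-memory list used as a log, and the record fields are all assumptions for illustration; a real deployment would sit at the edge or in server middleware and write to durable storage.

```python
from collections import Counter
from datetime import datetime, timezone
from typing import Optional


def wants_markdown(accept_header: str) -> bool:
    """True if text/markdown is one of the media types in an Accept header."""
    # Drop parameters such as ";q=0.8" and compare case-insensitively.
    media_types = [part.split(";")[0].strip().lower() for part in accept_header.split(",")]
    return "text/markdown" in media_types


def log_markdown_request(log: list, headers: dict, path: str,
                         now: Optional[datetime] = None) -> bool:
    """Append a record to `log` if the request asked for markdown."""
    lowered = {key.lower(): value for key, value in headers.items()}
    if not wants_markdown(lowered.get("accept", "")):
        return False
    log.append({
        "user_agent": lowered.get("user-agent", ""),
        "timestamp": (now or datetime.now(timezone.utc)).isoformat(),
        "path": path,
    })
    return True


def frequency_by_user_agent(log: list) -> Counter:
    """Count logged markdown requests per user agent string."""
    return Counter(record["user_agent"] for record in log)
```

Calling `log_markdown_request` once per incoming request and then `frequency_by_user_agent` on the accumulated log gives the four fields tracked here: user agent, timestamp, path, and frequency.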

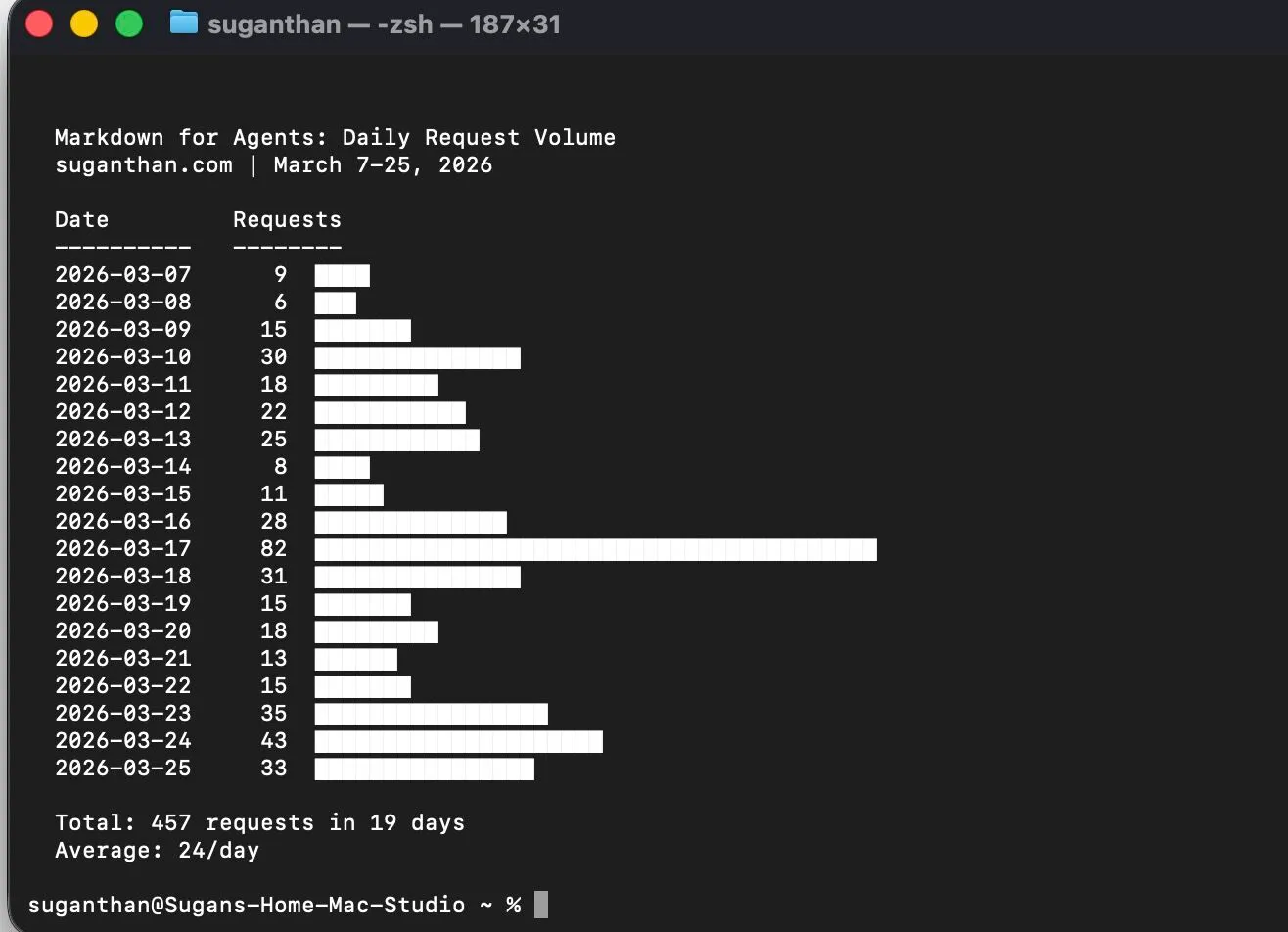

18 days in. 457 requests. Average about 25/day.

Then on day 10, something interesting happened.

A single crawler hit every page on my site. Systematically. One page every 50 seconds.

This is not Googlebot. This is the next wave of search, and they’re already crawling.

It rotated between three different user agent strings (Windows Chrome, Mac Chrome, Linux Chrome) but the timing pattern was identical. Classic headless browser pool.
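One way to check for that kind of pattern in a request log, sketched under some assumptions: requests arrive as `(timestamp_in_seconds, user_agent)` pairs, and "identical timing" is approximated as a low standard deviation in the gaps between consecutive requests. The function name and the 5-second jitter threshold are illustrative choices, not values taken from the data above.

```python
from statistics import pstdev


def looks_like_one_crawler(requests, max_jitter_seconds=5.0):
    """Heuristic: several user agents, but one steady request cadence.

    `requests` is a list of (timestamp_seconds, user_agent) tuples.
    """
    if len(requests) < 3:
        return False  # too few requests to judge a cadence
    ordered = sorted(requests)
    times = [timestamp for timestamp, _ in ordered]
    # Gaps between consecutive requests, ignoring which user agent sent them.
    gaps = [later - earlier for earlier, later in zip(times, times[1:])]
    distinct_agents = {user_agent for _, user_agent in ordered}
    return len(distinct_agents) > 1 and pstdev(gaps) <= max_jitter_seconds


# Example: three rotating user agents, one request every 50 seconds.
agents = ["windows-chrome", "mac-chrome", "linux-chrome"]
sample = [(i * 50, agents[i % 3]) for i in range(9)]
print(looks_like_one_crawler(sample))
```

This is only a heuristic. Steady gaps across rotating user agents are suggestive of a single scheduler, but shared IP ranges or other fingerprints would be needed to say more.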

The user agents weren’t any of the known bots. No “GPTBot.” No “PerplexityBot.” No “ClaudeBot.” Just standard Chrome UA strings requesting markdown specifically.

My read: somebody’s retrieval pipeline has already adopted this standard and looks to be building an index of sites that serve markdown to AI.

The question I’m trying to answer: if you make your content easier for AI to consume, do they consume more of it? And does that translate into citations, visibility, or traffic from AI search?

Too early to say. But something is definitely paying attention.

The full write-up, with the data and my findings, is coming in a few weeks. If you want it when it drops, sign up for my newsletter.